If you're still triggering every Claude Code run yourself, you've built an assistant. Not a system.

I know because I did the same thing for months. Built skills, ran pipelines, got solid outputs. Closed the terminal. Started again the next day.

The unlock wasn't better prompts. It was getting Claude Code to run without me.

Last week I typed four words into Slack. An 8-stage pipeline fired automatically: scraped LinkedIn engagers, qualified them against ICP filters, deduped against a 90-day window, enriched with company data, generated personalized sequences, saved the results to Supabase, pushed to Smartlead, and sent me a Slack notification when it was done. I was on a client call the entire time.

I've logged 500+ hours with Claude Code building GTM systems in production. Here's the architecture that makes that possible, and what it actually takes to get your skills compounding instead of just repeating.

Skills vs. Prompts: The Architecture That Travels

Before you can get Claude Code running without you, you need to understand what actually persists across sessions.

A prompt doesn't. A skill does.

Here's what I mean. If you drop into a terminal session and paste your ICP definition, your tone of voice guidelines, and your current offer details, that context lives in one session. The moment you close the terminal, it's gone. The next session starts from zero.

A skill is different. It's a file. It lives in .claude/skills/. Claude Code discovers it automatically when a session starts, so you never paste it in again. More importantly, when you bundle skills into a deployed agent, that context travels with the agent to wherever it runs: trigger.dev, Railway, a cron job, a webhook handler.

This is the architectural insight that changes everything.

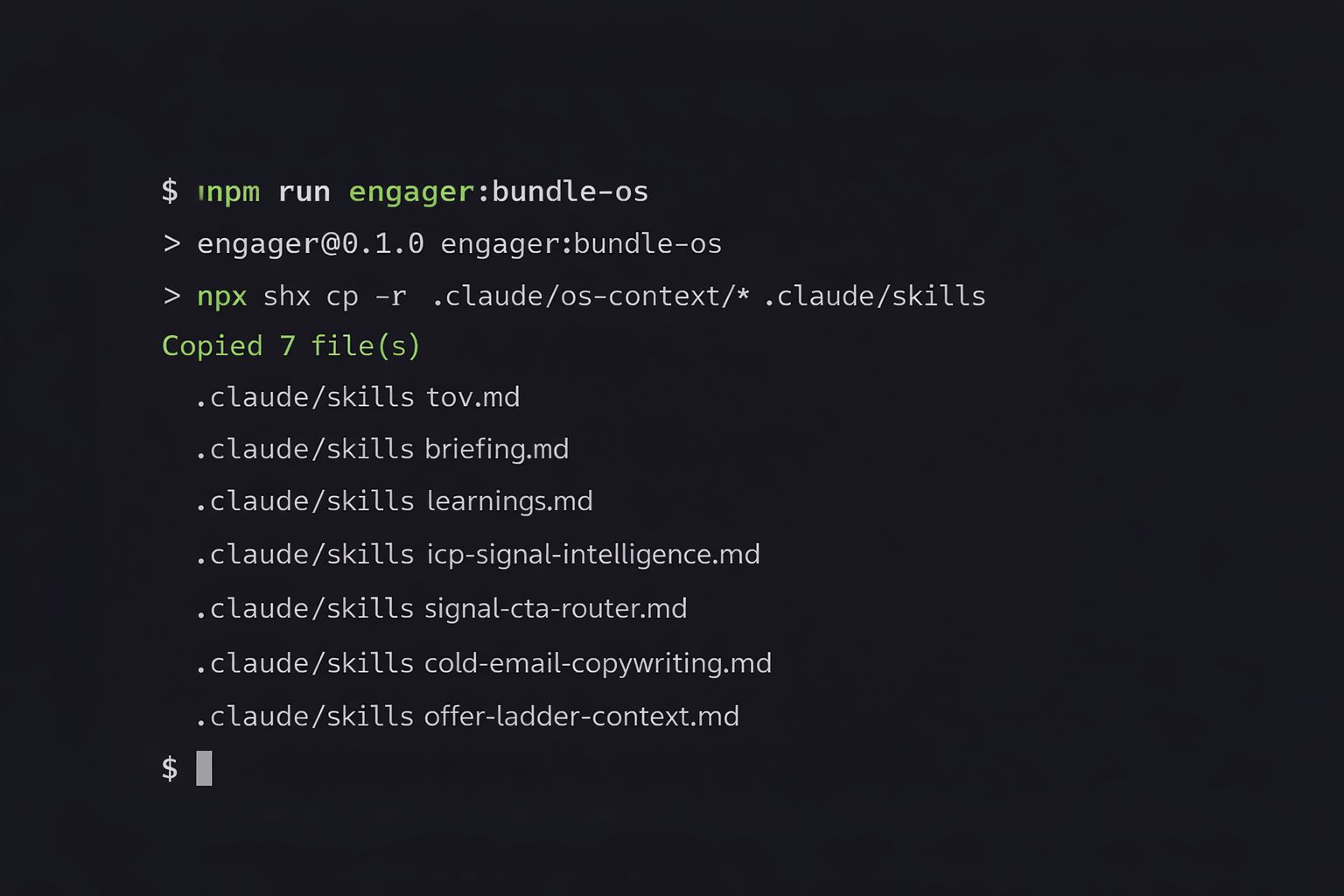

The post-engager pipeline I run has 7 skills bundled into its deployment directory: tov.md, briefing.md, learnings.md, icp-signal-intelligence.md, signal-cta-router.md, cold-email-copywriting.md, and offer-ladder-context.md. Every time trigger.dev fires the pipeline, all 7 files travel with it. The agent knows my ICP, my tone, my current offer, and what worked in the last 90 days of campaigns. No manual reload. No re-briefing. The context is baked into the deployment.
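To make "the context travels with the agent" concrete, here is a minimal sketch of a loader that reads the bundled files at the start of a run. The file names come from the list above; the function name, the bundle directory, and the idea of joining the files into one string are assumptions for illustration, not the actual implementation.

```python
from pathlib import Path

# File names are taken from the article; everything else here is hypothetical.
SKILL_FILES = (
    "tov.md",
    "briefing.md",
    "learnings.md",
    "icp-signal-intelligence.md",
    "signal-cta-router.md",
    "cold-email-copywriting.md",
    "offer-ladder-context.md",
)


def load_bundled_context(bundle_dir: Path, names=SKILL_FILES) -> str:
    """Concatenate every bundled skill file into one context string.

    Raises FileNotFoundError if a file is missing, so a bad bundle fails
    loudly instead of running without context.
    """
    sections = []
    for name in names:
        path = bundle_dir / name
        if not path.is_file():
            raise FileNotFoundError(f"missing skill file: {path}")
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {name}\n{body}")
    return "\n\n".join(sections)
```

Failing on a missing file is a deliberate choice in this sketch: a run with six of seven files would still produce output, just worse output, and nobody would notice.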

That's the foundation. Here's how you build on it.

The Two Deployment Patterns That Actually Work

There are two patterns I've found reliable for taking Claude Code agents out of your direct control. They serve different use cases.

Pattern 1: Event-Triggered Deployment via trigger.dev

This is for work that should happen in response to a specific event. Someone engaged with a LinkedIn post. A job signal fired. A new lead entered the pipeline.

My post-engager works like this: I type /engage <linkedin-post-url> in Slack. A Slack webhook fires. trigger.dev picks it up. An 8-stage pipeline runs automatically: scrape engagers from the post, qualify against ICP title filters, dedup against a 90-day window, enrich with company data, generate personalized email sequences, save to Supabase, push to Smartlead, notify Slack with results.
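The 90-day dedup stage is the easiest one to picture in code. The sketch below assumes contacts are keyed by email address and that the last outreach date for each one is already available as a dictionary; both are assumptions, and the real pipeline may key on something else entirely.

```python
from datetime import datetime, timedelta, timezone

DEDUP_WINDOW = timedelta(days=90)


def filter_recent_contacts(candidates, last_contacted, now=None):
    """Drop candidates that were contacted within the last 90 days.

    candidates: iterable of email strings.
    last_contacted: dict mapping lowercase email -> timezone-aware datetime.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - DEDUP_WINDOW
    fresh = []
    seen = set()
    for email in candidates:
        key = email.strip().lower()
        if key in seen:
            continue  # duplicate inside this batch
        seen.add(key)
        previous = last_contacted.get(key)
        if previous is not None and previous >= cutoff:
            continue  # still inside the 90-day window
        fresh.append(key)
    return fresh
```

Passing `now` explicitly keeps the function deterministic, which matters once no human is watching the run.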

I'm not in any of those steps.

The pipeline pushes to a dedicated campaign running on 50 inboxes at roughly 100 emails per day. By the time I glance at Slack again, there's a notification showing how many qualified contacts from that post moved into active outreach.

The key to making this work: the pipeline needs a defined scope. 8 specific steps. Deterministic inputs. Predictable outputs. Claude Code is not improvising in the middle. It's executing a documented process with OS-level context (the bundled skill files) loaded at the start of every run.
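A defined scope can be expressed as an ordered list of stage functions that all accept and return the same state object. This is a sketch under that assumption; `RunState`, `run_pipeline`, and the two placeholder stages are hypothetical names, and the real stages would call out to scrapers, Supabase, and Smartlead.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RunState:
    """Data handed from one stage to the next (fields are illustrative)."""
    post_url: str
    contacts: list[dict] = field(default_factory=list)
    log: list[str] = field(default_factory=list)


Stage = Callable[[RunState], RunState]


def run_pipeline(state: RunState, stages: list[tuple[str, Stage]]) -> RunState:
    """Run each stage in order; record which stage failed, then re-raise."""
    for name, stage in stages:
        try:
            state = stage(state)
        except Exception as exc:
            state.log.append(f"{name}: failed ({exc})")
            raise
        state.log.append(f"{name}: ok ({len(state.contacts)} contacts)")
    return state


# Placeholder stages standing in for the real scrape and qualify steps.
def scrape(state: RunState) -> RunState:
    state.contacts = [
        {"name": "Ada", "title": "VP Sales"},
        {"name": "Bo", "title": "Intern"},
    ]
    return state


def qualify(state: RunState) -> RunState:
    state.contacts = [c for c in state.contacts if "VP" in c["title"]]
    return state
```

Calling `run_pipeline(RunState("https://example.com/post"), [("scrape", scrape), ("qualify", qualify)])` leaves one contact in the state and one log line per stage, which is the kind of summary a final Slack notification could be built from.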

One more thing. Before every deployment, run the bundle step. For me that's npm run engager:bundle-os. It copies the OS files into the deployment directory so the context travels with the agent. Skip this step and the deployed agent runs blind.
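What a bundle step does is not complicated. The sketch below is not the contents of `engager:bundle-os`, which the article does not show; it is a hypothetical equivalent that copies a named set of context files into a deployment directory and refuses to proceed if any are missing.

```python
import shutil
from pathlib import Path


def bundle_os_files(source_dir: Path, deploy_dir: Path, names: list[str]) -> list[Path]:
    """Copy the named context files into the deployment directory.

    Fails before copying anything if a source file is missing.
    """
    missing = [n for n in names if not (source_dir / n).is_file()]
    if missing:
        raise FileNotFoundError(f"cannot bundle, missing: {', '.join(missing)}")
    deploy_dir.mkdir(parents=True, exist_ok=True)
    # shutil.copy2 preserves modification times and returns the destination path.
    return [Path(shutil.copy2(source_dir / n, deploy_dir / n)) for n in names]
```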

Pattern 2: Scheduled Heartbeat via launchd

This is for oversight work that should run on a cadence, regardless of specific events.

The pattern: launchd fires a shell script every 90 minutes. The script first queries the Notion task queue. If there are zero actionable tasks, it exits immediately. No Claude session launched, no compute spent, no noise in the logs. If there are tasks, it launches claude -p with the master prompt, lets the session run, and logs everything to a local log file.
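For the scheduling half, launchd reads a property list that names the program to run and how often. The sketch below generates one with Python's standard `plistlib`. The launchd keys (`Label`, `ProgramArguments`, `StartInterval`, `StandardOutPath`, `StandardErrorPath`) are real; the label, script path, and log path you pass in are placeholders, not the values from the setup described here.

```python
import plistlib

# launchd's StartInterval is expressed in seconds: 90 minutes = 5400 seconds.
HEARTBEAT_INTERVAL_SECONDS = 90 * 60


def build_heartbeat_plist(label: str, script_path: str, log_path: str) -> bytes:
    """Return an XML launchd job definition that runs script_path every 90 minutes."""
    job = {
        "Label": label,
        "ProgramArguments": ["/bin/sh", script_path],
        "StartInterval": HEARTBEAT_INTERVAL_SECONDS,
        "StandardOutPath": log_path,
        "StandardErrorPath": log_path,
    }
    return plistlib.dumps(job)
```

The returned bytes would typically be written to a file under ~/Library/LaunchAgents/ and registered with launchctl.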

The pre-check is load-bearing. Without it, you'd be launching Claude sessions constantly against an empty queue. With it, compute gets spent only when there's actual work.
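The pre-check logic fits in a few lines. This sketch is written in Python rather than shell, and it assumes a `fetch_tasks` callable that stands in for the Notion query, which is not shown here. The only external command it uses is `claude -p`, as described above.

```python
import subprocess
from typing import Callable


def heartbeat(
    fetch_tasks: Callable[[], list],
    master_prompt: str,
    log_path: str,
    run=subprocess.run,
) -> bool:
    """Launch one Claude session only if the queue has work.

    Returns True if a session was launched, False if the queue was empty.
    """
    tasks = fetch_tasks()
    if not tasks:
        return False  # empty queue: no session, no compute, no log noise
    with open(log_path, "a", encoding="utf-8") as log:
        # check=False: a failed session is recorded in the log, not raised.
        run(["claude", "-p", master_prompt], stdout=log, stderr=subprocess.STDOUT, check=False)
    return True
```

The `run` parameter exists so the function can be exercised with a fake runner before it is ever pointed at a real session.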

This pattern runs 30 agents across client contexts, internal operations, and system health. The daily digest hits Notion at 8:30am. I review it when I want to. The system doesn't wait for me to be available.

If this sounds familiar, you may have seen Paperclip (paperclip.ing). It's an open-source project that hit 43K GitHub stars in under six weeks, and it formalizes the exact same pattern: agents wake on a schedule, check for assigned work, execute with persistent context, and sleep. They call it heartbeat scheduling. The core mechanics are identical. I built mine with launchd and Notion before Paperclip existed, but if you're starting from scratch today, Paperclip gives you the orchestration layer out of the box: org charts, budgets, task queues, and multi-company isolation. The pattern is the same. Paperclip just packages it.

What "Compounding" Actually Means in Practice

Here's where most people get the architecture wrong.

They build a skill that generates good output. They run it a few times. It works. They think they're done.

But the skill isn't compounding yet. It's repeating. Repetition and compounding are not the same thing.

Compounding requires a feedback loop.

In my pipeline, the feedback loop is learnings.md. After every campaign debrief, specific entries get appended: what copy patterns drove replies, which hooks got ignored, which CTAs converted, what company profiles the system misclassified as ICP when they weren't. Those entries are part of the OS file bundle. Every subsequent run loads them.

The effect accumulates. The copy the post-engager generates six months into deployment is meaningfully sharper than what it generated at launch. Not because I rewrote the prompt. Because the learnings file accumulated real-world signal and the skill reads it at the start of every run.
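The write side of that loop can be as small as an append. The entry format below (date, category, note) is an assumption made for illustration; the article does not specify how learnings.md is structured.

```python
from datetime import date
from pathlib import Path


def append_learning(learnings_path: Path, category: str, note: str, on=None) -> str:
    """Append one dated debrief entry to the learnings file and return the line written."""
    on = on or date.today()
    entry = f"- {on.isoformat()} [{category}] {note.strip()}\n"
    # Append mode creates the file on first use and never rewrites old entries.
    with learnings_path.open("a", encoding="utf-8") as fh:
        fh.write(entry)
    return entry
```

Appending rather than rewriting keeps the history intact, so a later run can still see which observations are old and which are recent.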

Without the feedback loop, you have a skill that runs autonomously but doesn't get smarter. That's better than nothing. It's not what compounding looks like.

The three components you need:

Skills that travel with OS-level context: your ICP, tone of voice, current offer

A deployment target that removes you from the runtime: trigger.dev, Railway, launchd

A feedback file that gets updated from real results and reloaded on the next run

All three together. Skip one and the system plateaus.

What Breaks (The Architecture to Avoid)

I've broken this system in two specific ways. Here's what to watch for.

Interactive session patterns. If your skill assumes you'll be there to redirect the agent, review output mid-run, or answer follow-up questions, it can't run autonomously. The pipeline needs to be self-contained. Input goes in, output comes out, no human required between stages.

In practice, this means pre-loading all context in the skills files before deploy. It means defining clear data contracts between pipeline stages so each step knows exactly what it's receiving. And it means using deterministic QA gates instead of human review at each step. My pipeline checks word count, banned phrases, and required CTA presence on every output. The system catches its own errors without me watching.
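A deterministic QA gate for those three checks might look like the sketch below. The thresholds, the banned phrases, and the CTA text are all inputs you would supply; none of the specific values in the usage note come from the pipeline described here.

```python
def qa_gate(email_body: str, max_words: int, banned_phrases: list[str], required_cta: str) -> list[str]:
    """Return a list of failure reasons; an empty list means the draft passes."""
    failures = []
    word_count = len(email_body.split())
    if word_count > max_words:
        failures.append(f"too long: {word_count} words (limit {max_words})")
    lowered = email_body.lower()
    for phrase in banned_phrases:
        if phrase.lower() in lowered:
            failures.append(f"banned phrase: {phrase!r}")
    if required_cta.lower() not in lowered:
        failures.append("missing required CTA")
    return failures
```

A draft like "I hope this email finds you well. Let's circle back." checked with a 5-word limit, "circle back" banned, and "quick chat" required fails all three checks, and the pipeline can drop or regenerate it without anyone reading it.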

Skills without the bundle step. A skill that works perfectly in your local Claude Code session can break when deployed to trigger.dev if it references files that don't travel with it.

Before every deployment, run whatever command copies your OS files into the deployment directory. For me: npm run engager:bundle-os. This is not optional. Context has to travel with the agent or the agent is just a generic LLM call with no knowledge of your business.
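One way to catch this failure before it reaches production is to scan the bundle for references to files that are not in it. This is a rough sketch that only looks for mentions of .md files by name or relative path; the regex and function name are assumptions, and a real check would need to match however your skills actually reference each other.

```python
import re
from pathlib import Path

# Matches references such as "learnings.md" or "../private/pricing.md".
MD_REFERENCE = re.compile(r"[\w./-]+\.md\b")


def find_unbundled_references(bundle_dir: Path) -> dict[str, list[str]]:
    """Map each bundled .md file to the .md files it mentions that are not in the bundle."""
    problems = {}
    for skill in sorted(bundle_dir.glob("*.md")):
        text = skill.read_text(encoding="utf-8")
        missing = sorted(
            {ref for ref in MD_REFERENCE.findall(text) if not (bundle_dir / ref).is_file()}
        )
        if missing:
            problems[skill.name] = missing
    return problems
```

An empty result means every .md file the bundle mentions is actually present in the bundle directory; anything else is a reason to stop the deploy.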

This is the difference between a skill that compounds locally and a skill that compounds in production.

The Buyer Question, Applied to Yourself

Every client I work with is asking some version of the same question: "Who can make this machine work without me babysitting it?"

That question applies to your own GTM infrastructure too.

If you're manually triggering every pipeline run, reviewing every output before it ships, and re-briefing Claude Code every time you open a terminal, you have an assistant. Not a system.

The architecture that gets you out of the runtime isn't complicated. A deployment target for event-driven work. A scheduled pattern for oversight work. OS files that travel with every deployment. A feedback loop that updates from real results and reloads on the next run. QA gates so the system catches its own errors.

Build it once. It runs while you're on calls, in client meetings, or offline for the night.

Until next week,

The GTM Architects