The $1.50 GTM Engine

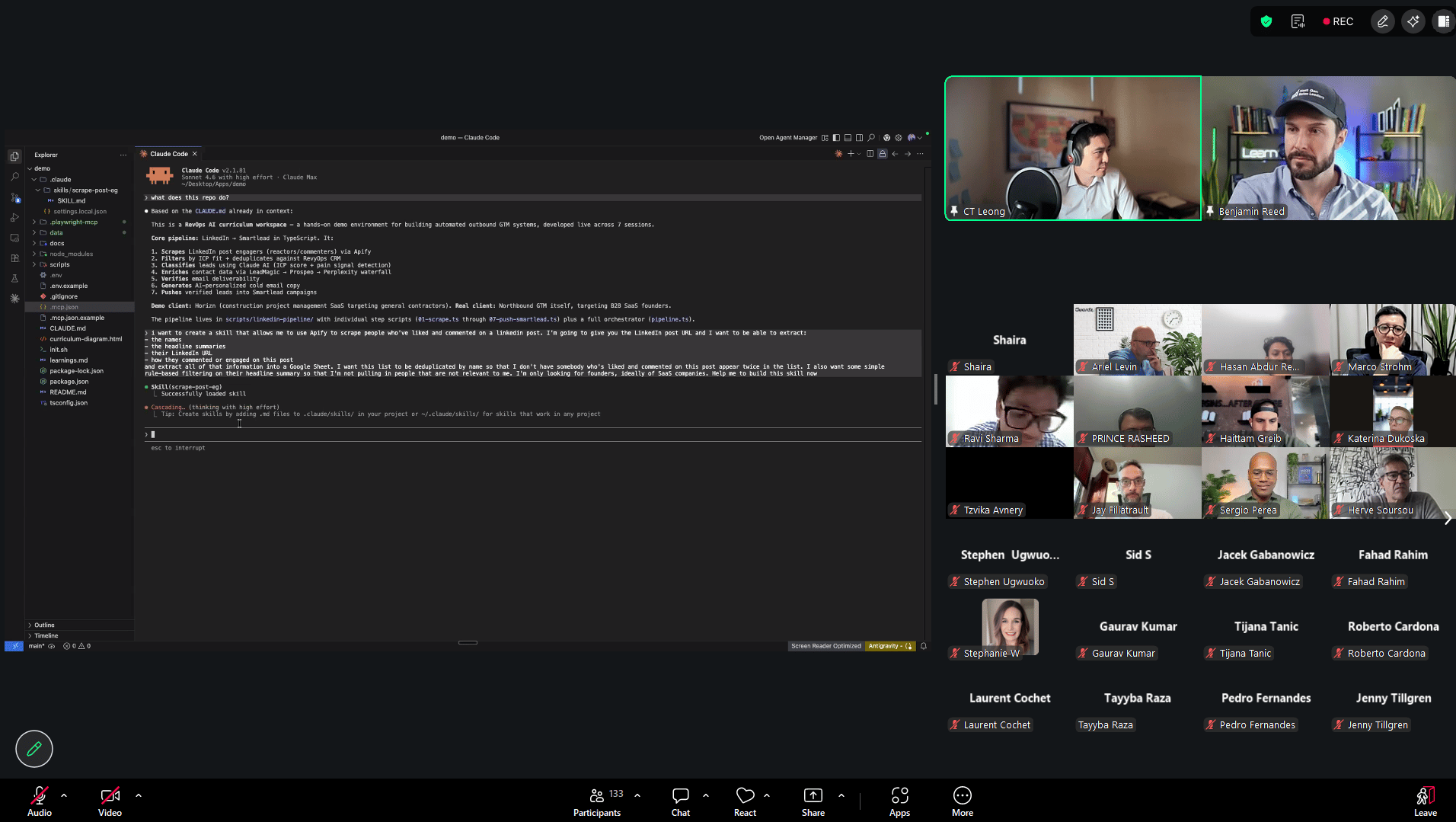

I run my entire GTM motion out of a terminal. Signal detection, outbound copy, prospect research, sales prep, content distribution. Seven Claude Code skills. Total cost for a full pipeline run: $1.50 in API calls.

This is not a demo. These skills run daily across live client work and my own outbound. They're the reason I can operate a full GTM motion without a team, an agency, or a $5K/month tool stack.

Here's the exact architecture.

Why Skills, Not Prompts

Most people use Claude Code like a chatbot. Type a prompt, get an answer, lose everything when the session ends. That's the hotel room. You check in, it's clean, you leave, and you start over.

Skills turn Claude Code into an apartment. You move in. Your ICP definition lives in CLAUDE.md. Your copy frameworks live in skill files. Your scoring models, enrichment waterfalls, campaign configs: all loaded automatically every time you open the terminal.

A skill is a SKILL.md file that encodes a specific workflow. Not a prompt. A methodology. It tells Claude how to think about a problem, what steps to follow, what quality checks to run, and what output format to produce. Build seven of them and your GTM runs from the terminal without you explaining context every session.

The compounding effect is the real unlock. Every campaign run, every prospect researched, every email written adds data back into the system. The skills get smarter because the context they operate on gets richer.

Here are the seven.

Skills 1 & 2: The Signal Layer

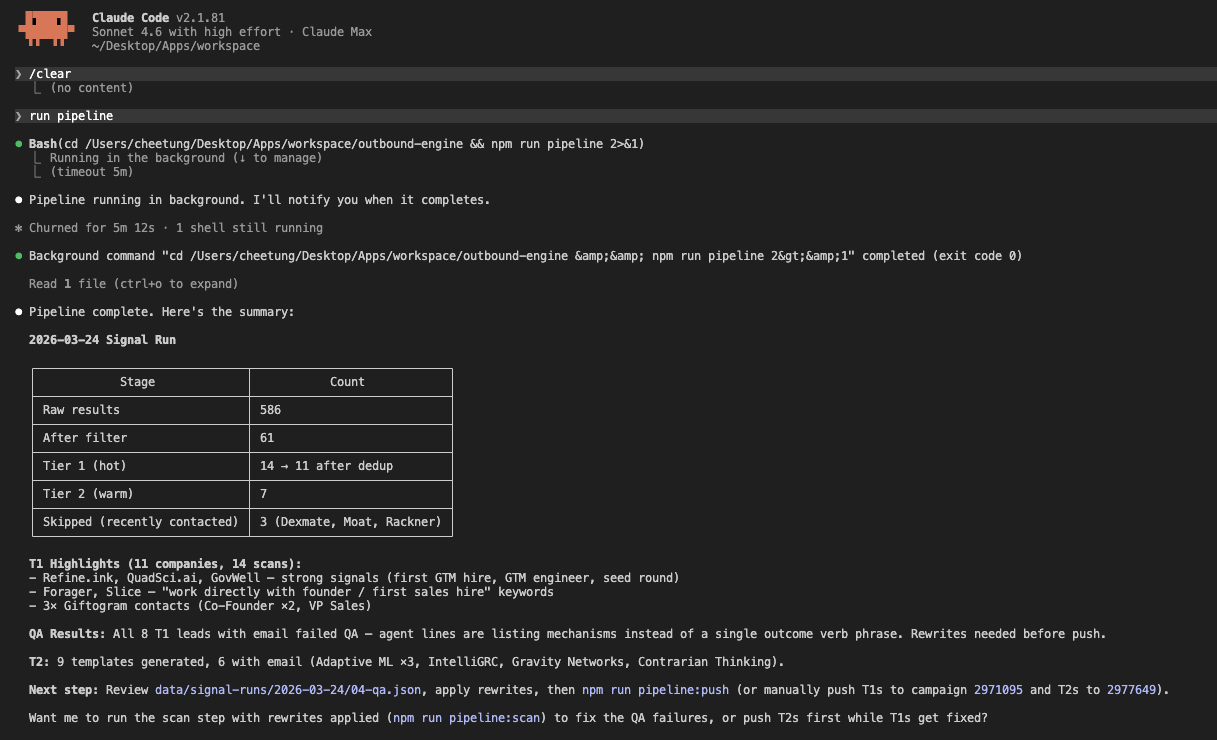

Skill 1: Signal Pipeline watches job postings for first-GTM-hire signals (SDR, AE, Head of Sales/Marketing) on Ashby, Greenhouse, Lever, and LinkedIn, using Trigify.io listeners and Apify scrapers. GPT-4o classifies each posting as T1 (hot: founder is hiring their first GTM person) or T2 (warm: expanding an existing team). The skill then deduplicates against a 90-day window in Supabase, enriches through an Apollo-to-Exa waterfall, verifies emails through BounceBan, and scores by ICP fit.

The output: a scored, enriched contact list ready for copy generation. One command. 142 contacts in the last run. 22 minutes end-to-end.
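To make the dedup-and-rank step concrete, here's a minimal Python sketch. It is an illustration, not the production skill: the `Signal` fields, the 0-1 `icp_score`, and the in-memory `last_contacted` dict (standing in for the Supabase lookup) are all assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta

DEDUP_WINDOW = timedelta(days=90)  # window length taken from the post


@dataclass(frozen=True)
class Signal:
    email: str
    tier: str         # "T1" or "T2", assigned upstream by the classifier
    icp_score: float  # hypothetical 0-1 ICP fit score


def filter_new(signals, last_contacted, today):
    """Drop signals whose contact was already seen inside the dedup window.

    `last_contacted` maps lowercase email -> date last seen; in a real
    pipeline this would be a database query rather than a dict.
    """
    fresh = []
    for s in signals:
        previous = last_contacted.get(s.email.lower())
        if previous is not None and today - previous < DEDUP_WINDOW:
            continue  # seen within the last 90 days, skip
        fresh.append(s)
    return fresh


def rank(signals):
    """Order T1 before T2, then highest ICP score first."""
    return sorted(signals, key=lambda s: (s.tier != "T1", -s.icp_score))
```

Running `rank(filter_new(signals, last_contacted, date.today()))` would give the ordered list that the copy skill consumes next.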

Skill 2: Cold Email Copywriting takes that scored list and generates personalized 3-email sequences plus a LinkedIn DM for each contact. T1 contacts get "agent framing" in the opener: a specific line about the system we built that solves the exact pain their job posting reveals. T2 contacts get a lighter touch.

The copy skill encodes frameworks tested across thousands of cold emails: pattern interrupts in E1, pain escalation in E2, soft CTA in E3. No mail merge variables. Each sequence reads like it was written for one person, because the enrichment data makes it specific enough to feel that way.

Total cost for the signal layer: $1.50 per pipeline run. The MCP/API calls across Trigify, Apify, Exa, email verification, and GPT-4o add up to less than a coffee.

Skills 3 & 4: The Conversion Layer

Skill 3: GTM Snapshot is the conversion weapon. When a warm prospect replies or shows genuine curiosity on LinkedIn, I run this skill. It pulls company data from Apollo (the gold is technology_names), runs three parallel Perplexity searches (jobs, news, reviews), scrapes their website with Playwright, and synthesizes everything into a 1-page deliverable.

The output: 2-3 specific, dollar-quantified findings about their GTM. Real examples from past snapshots: "$267K in quoted pipeline with zero follow-up" and "a dead form stealing 30% of clicks from the real CTA." Each finding maps to a system that fixes it.
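The parallel research step can be sketched with `asyncio.gather`. This is a rough illustration under stated assumptions: `run_search` is a hypothetical stand-in that returns a placeholder instead of calling any real search API, and the result shape is invented for the example.

```python
import asyncio


async def run_search(topic: str, company: str) -> dict:
    """Hypothetical stand-in for one search call; returns a placeholder."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return {"topic": topic, "query": f"{company} {topic}"}


async def research(company: str) -> dict:
    """Run the jobs, news, and reviews searches concurrently."""
    topics = ("jobs", "news", "reviews")
    results = await asyncio.gather(*(run_search(t, company) for t in topics))
    # Key results by topic so the synthesis step can look them up by name.
    return {r["topic"]: r for r in results}


if __name__ == "__main__":
    print(asyncio.run(research("Acme")))
```

Because the three searches don't depend on each other, running them concurrently means the step takes roughly as long as the slowest search rather than the sum of all three.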

I don't name the deliverable in outreach. I just say "I spent 20 minutes on your site." The iceberg effect does the selling. They see three findings and wonder what else I found. That books the call.

Skill 4: Pre-Call Audit runs 15 minutes before every discovery call. It pulls the prospect's signal data, enrichment history, and any prior Snapshot findings, then structures a call brief: what they're likely feeling, what the three most probable pain points are, and which of the four GTM build areas (outbound, content, social/SEO, paid ads) is the highest-leverage first move.

I show up knowing more about their GTM than they expect. That changes the dynamic from "pitch" to "let me show you what I already found."

Skills 5 & 6: The Content + Engagement Layer

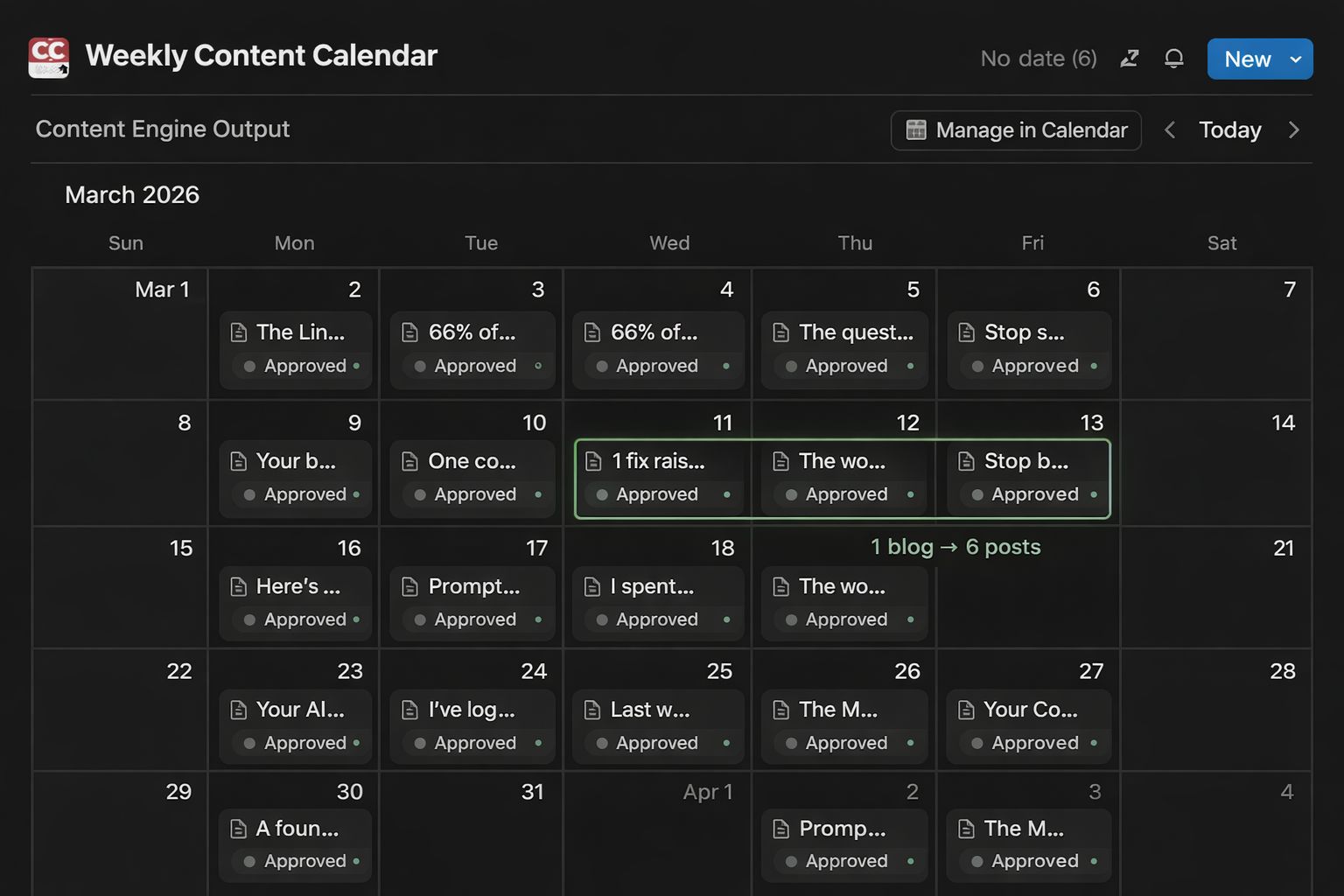

Skill 5: Weekly Content Engine runs every week. Step 0 scrapes my recent LinkedIn posts and 1,050 posts from 14 GTM creators, syncs engagement data to Notion, and generates a learning brief (what's working, what's not, which topics are trending). Step 1 researches and presents 3 topic candidates ranked by engagement data. Step 2 writes a 1,200-1,500 word blog post using direct-response copy frameworks. Step 3 atomizes into 6 LinkedIn posts distributed over 4 weeks. Step 4 pushes everything to a Notion content calendar.

One session. One blog post. Six LinkedIn posts. All scheduled with dates, formats, and pillar tags. The blog post you're reading right now was written this way.
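For the scheduling step, one simple way to spread six posts over four weeks is even spacing. The sketch below assumes that approach; the post doesn't specify the actual scheduling rule, and `schedule_posts` is a hypothetical helper.

```python
from datetime import date, timedelta


def schedule_posts(start: date, posts: int = 6, weeks: int = 4) -> list[date]:
    """Spread `posts` publish dates evenly across `weeks`, starting at `start`.

    Even spacing is an assumption for illustration; a real calendar might
    also skip weekends or pin posts to fixed weekdays.
    """
    span_days = weeks * 7
    return [start + timedelta(days=(i * span_days) // posts) for i in range(posts)]
```

With the defaults, the day offsets from the start date come out as 0, 4, 9, 14, 18, and 23, so all six posts land inside the four-week window.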

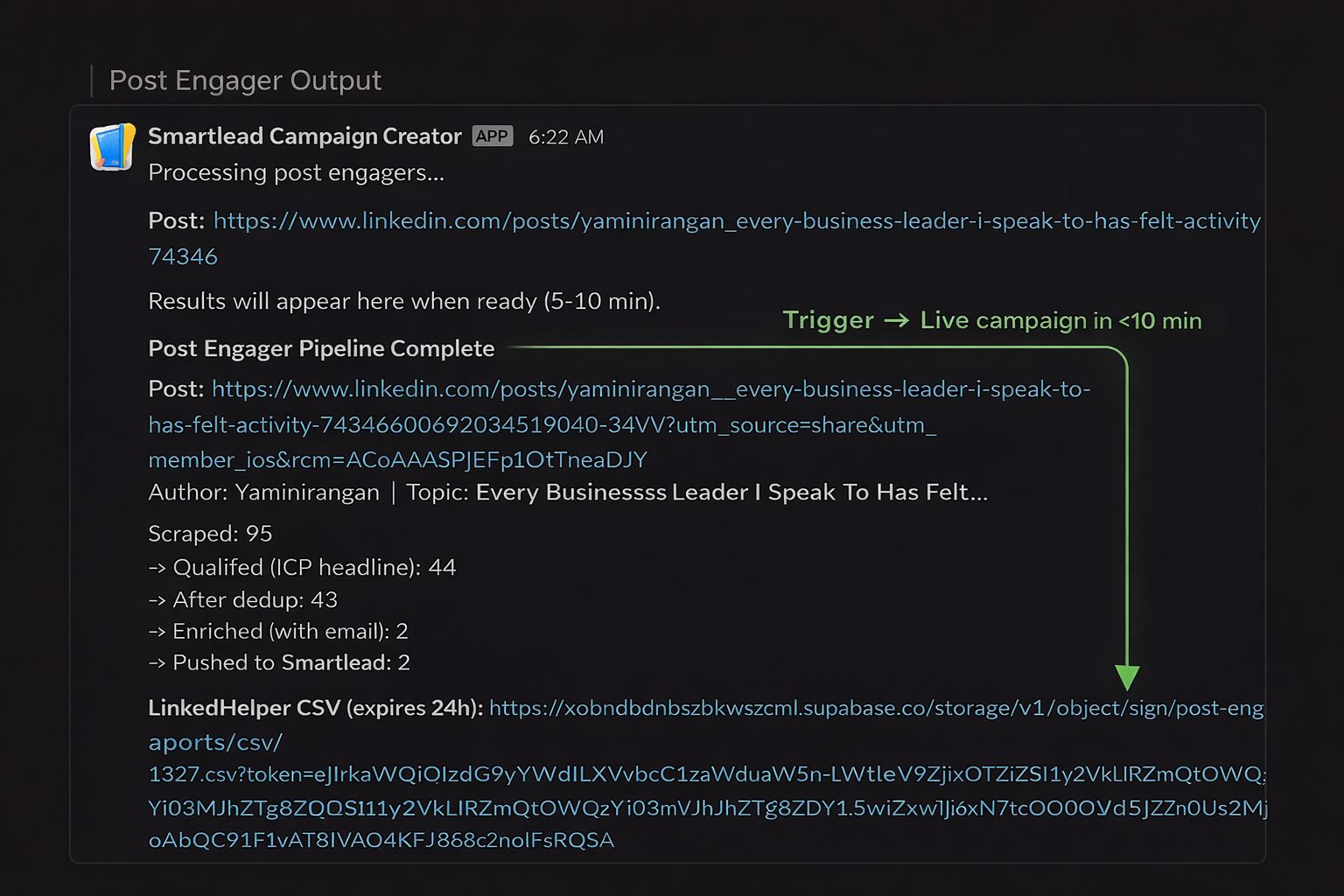

Skill 6: Post Engager is on-demand. When I spot a relevant LinkedIn post from an ICP-adjacent account, I type /engage <url> in Slack. An n8n workflow triggers a Railway service that scrapes every person who liked or commented, qualifies them against an ICP headline filter, and deduplicates against existing contacts. It then enriches the survivors, generates "Variant B" copy that references the specific post and what they said about it, and pushes everything to a dedicated Smartlead campaign. A LinkedHelper CSV drops back into Slack for LinkedIn connection requests.

From Slack command to live campaign: under 10 minutes.
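The qualify-and-dedupe step in the middle of that flow might look something like this. It's a sketch only: the keyword list, the `profile_url` and `headline` field names, and plain substring matching are assumptions, not the actual filter.

```python
ICP_KEYWORDS = ("founder", "ceo", "head of sales")  # hypothetical filter terms


def qualify_engagers(engagers, known_profiles, keywords=ICP_KEYWORDS):
    """Keep engagers whose headline matches the ICP and who are not known yet.

    `engagers` is a list of dicts with "profile_url" and "headline" keys;
    `known_profiles` is an iterable of profile URLs already in the CRM.
    """
    seen = {url.lower() for url in known_profiles}
    qualified = []
    for person in engagers:
        url = person["profile_url"].lower()
        if url in seen:
            continue  # existing contact, or already added in this run
        if any(k in person["headline"].lower() for k in keywords):
            qualified.append(person)
            seen.add(url)  # someone who liked and commented appears twice
    return qualified
```

Tracking `seen` inside the loop matters here because the same person can show up in both the likes and the comments of a single post.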

Skill 7: The Routing Intelligence

Skill 7: Signal CTA Router is the glue. It maps signal type to copy angle. A job posting signal gets a different E1 opener than a post engagement signal. A company in the outbound GTM area gets different framing than one in the content area.

This skill also handles the "agent framing" injection: when the signal indicates the prospect would respond to "we built an agent that does X," the router injects that line into the E1 body and the LinkedIn DM opener. When the signal is softer, it defaults to proof-of-competence framing instead.

Without the router, every contact would get the same copy template with different variables. With it, the copy adjusts to their specific situation. Because the system understood the signal before it wrote a word.
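At its simplest, a router like this is a lookup table from signal to copy angle. Here's a minimal sketch; the signal names, angle labels, and fallback order are illustrative assumptions, not the real skill's configuration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    angle: str           # which E1 opener family to use (hypothetical labels)
    agent_framing: bool  # inject the "we built an agent that does X" line?


ROUTES = {
    ("job_posting", "T1"): Route("first_gtm_hire", agent_framing=True),
    ("job_posting", "T2"): Route("team_expansion", agent_framing=False),
    ("post_engagement", None): Route("post_reference", agent_framing=False),
}

# Softer or unknown signals fall back to proof-of-competence framing.
DEFAULT_ROUTE = Route("proof_of_competence", agent_framing=False)


def route(signal_type: str, tier: Optional[str] = None) -> Route:
    """Look up the most specific route, then a tier-agnostic one, then default."""
    return (
        ROUTES.get((signal_type, tier))
        or ROUTES.get((signal_type, None))
        or DEFAULT_ROUTE
    )
```

Keeping the mapping in data rather than in branching logic means adding a new signal type is a one-line change to the table.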

The Flywheel

None of these skills work in isolation. The content engine (Skill 5) surfaces which topics resonate with the ICP. That feeds back into how the signal pipeline (Skill 1) scores relevance. The GTM Snapshot data (Skill 3) improves discovery calls, which reveal new pain patterns, which sharpen the copy frameworks in Skill 2. Each cycle gets tighter.

This is what "compounding" actually means in a GTM context. Not a metaphor. Data flowing between systems, making each one more precise.

What to Build Monday Morning

Pick the skill that addresses your biggest bottleneck right now.

No pipeline? Start with the signal layer. Build the scraper, the classifier, and the copy generator. You'll have outbound running in a week.

Pipeline exists but deals go cold? Build the GTM Snapshot. Show up to every conversation with 2-3 dollar-quantified findings about their business. The close rate changes.

Invisible on LinkedIn? Build the content engine. One blog post, six LinkedIn posts, scheduled for a month. Run it weekly and the compounding effect kicks in by month two.

You don't need all seven on day one. You need one that works, then the next one that plugs the gap the first one reveals.

If your GTM is held together with founder duct tape and you're tired of being in every workflow, book a 25-min discovery call and I'll walk you through which skill to build first.

Until next week,

The GTM Architects