You spent 90 minutes building something impressive in Claude Code. Loaded your Apollo data, gave it your ICP criteria, watched it enrich 50 prospects, write personalized emails, and stage a sequence in Smartlead. It worked.

Then you closed the session.

Two hours later, you opened a new one. Typed the same goal. Claude Code looked at you like it had never met you before.

This is the most common Claude Code GTM failure pattern. It has nothing to do with the model, your prompts, or how well you defined your ICP. It's an architecture problem.

The teams pulling ahead with Claude Code in 2026 aren't better prompters. They've built something different. They stopped treating Claude Code as an AI assistant and started building a permanent operating environment around it.

That shift has a name: context engineering. And it's the difference between a demo that works once and a system that runs every week without you re-explaining everything from scratch.

Most GTM teams are still living in the demo. Here's what the machine actually looks like.

Why Prompt Engineering Hits a Wall

Prompt engineering is what most people are doing right now. Open a session, explain your ICP, paste in some data, ask Claude to do something useful. It works. Sometimes well.

The problem is the reset. Every session starts from zero. Claude doesn't know your ICP fit criteria. It doesn't know your offer. It doesn't know that your last sequence got 8% reply rates because you led with the job signal angle and not the hiring freeze angle. You re-explain it every time. Every single time.

Dan Shipper framed this exactly right: "The Claude app is like a hotel room. Clean, set up for you, but you start fresh each time. Claude Code is like having your own apartment with AI in it."

Most people are living in hotel rooms while paying for an apartment.

Prompt engineering treats each session as a fresh conversation. Context engineering builds the apartment. That's the whole distinction.

The Four Pieces of a Production GTM Context Stack

Here's what a working context layer looks like. Not the demo version. The version that runs every Monday morning with no setup, no re-briefing, no explaining who your ICP is for the fortieth time.

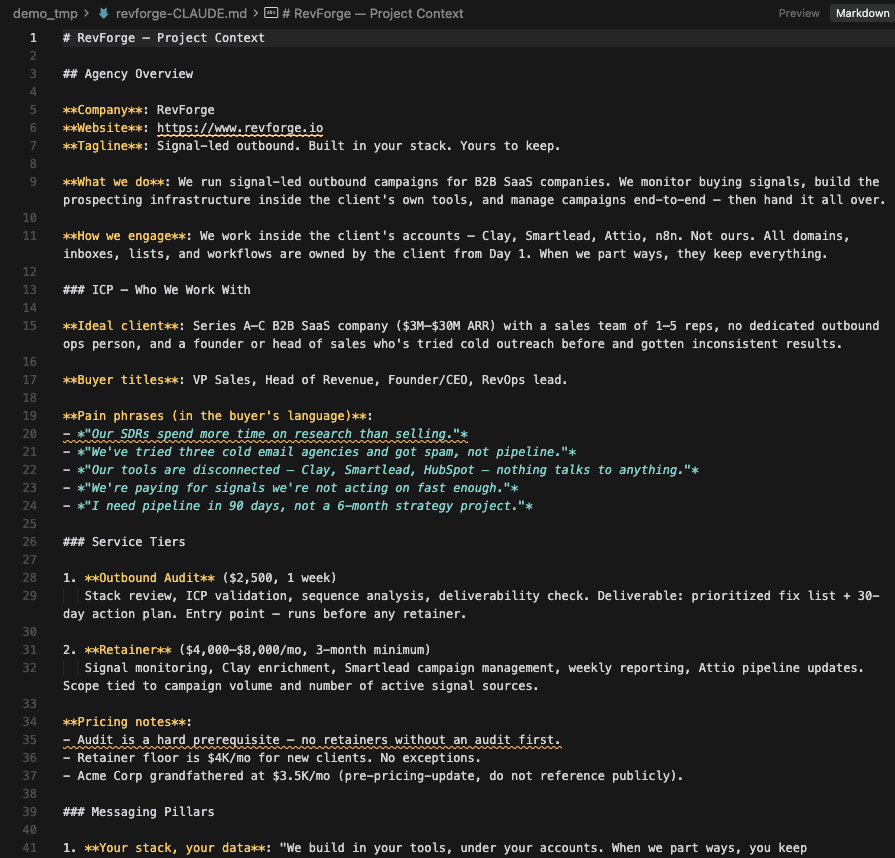

1. CLAUDE.md: The Brain

Your CLAUDE.md file is the operating manual Claude reads at the start of every session. Not a static template you set once and forget. A living document that grows with every campaign run.

It should contain your ICP definition at a level of specificity that would embarrass most marketing decks. Not "B2B SaaS companies." Something closer to: "Technical founders at B2B software companies, real traction, messy GTM systems, no in-house builder to wire them together. The signal that moves them from cold to warm: they posted a sales hire, then reposted the same role 90 days later."

It should also contain your messaging framework: what angle works with which segment, what failed last quarter and why, what the three replies that converted to calls had in common.

When a session starts, Claude reads this file. It doesn't ask you to re-explain who you're selling to. That conversation already happened. It's in the file.
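
A signal written at that level of specificity can be checked mechanically. Here is a minimal sketch of the "posted a sales hire, then reposted the same role 90 days later" signal. The `reposted_roles` function, the `(title, date)` input shape, and the 90-day minimum gap are illustrative assumptions, not part of any real tool.

```python
from datetime import date

def reposted_roles(postings, min_gap_days=90):
    """Return normalized titles posted again at least min_gap_days after first seen.

    `postings` is a list of (title, posted_on) tuples. Hypothetical input shape.
    """
    first_seen = {}
    reposted = set()
    # Walk postings oldest to newest so the first sighting is recorded first.
    for title, posted_on in sorted(postings, key=lambda p: p[1]):
        key = title.strip().lower()
        if key in first_seen:
            if (posted_on - first_seen[key]).days >= min_gap_days:
                reposted.add(key)
        else:
            first_seen[key] = posted_on
    return sorted(reposted)

# Illustrative data only.
postings = [
    ("Account Executive", date(2026, 1, 5)),
    ("Backend Engineer", date(2026, 1, 20)),
    ("Account Executive", date(2026, 4, 10)),
]
print(reposted_roles(postings))
```

If a rule cannot be expressed this plainly, the ICP definition in the file is probably still too vague.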

2. Skills: Your GTM Playbooks

A skill is a text file that holds the instructions for one specific, repeatable task. ICP scoring. Sequence structure. Copy frameworks. Signal classification.

The critical distinction: a skill isn't a prompt. It's a methodology. A prompt gives Claude instructions for one session. A skill encodes how you want every future run of that task to go: next week, next quarter, for a different client segment. The skill file runs the same way each time, and you improve it based on results.

In a production outbound system, an ICP scoring skill might contain: the five firmographic dimensions you weight, the signal hierarchy you apply, the edge cases where you override the score, and the specific reasoning Claude should use when a company fits the profile but has the wrong tech stack. That's not a prompt. That's a judgment model encoded in text.
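
To make "a judgment model encoded in text" concrete, here is a rough sketch of the same logic as code. The dimension names, weights, signal bonuses, and the 0.5 cap for a wrong tech stack are all hypothetical placeholders. A real skill file would carry your own values and reasoning.

```python
from dataclasses import dataclass, field

# Hypothetical weights for five firmographic dimensions (sum to 1.0).
WEIGHTS = {
    "industry_fit": 0.30,
    "headcount_fit": 0.20,
    "funding_stage_fit": 0.20,
    "geo_fit": 0.10,
    "gtm_maturity_fit": 0.20,
}

# Hypothetical signal hierarchy: only the strongest signal present counts.
SIGNAL_BONUS = {
    "role_reposted": 0.15,
    "sales_hire_posted": 0.10,
    "linkedin_activity": 0.05,
}

@dataclass
class Company:
    name: str
    dimensions: dict                      # dimension name -> fit in [0.0, 1.0]
    signals: list = field(default_factory=list)
    tech_stack_ok: bool = True

def score(company):
    """Return (score, reason). Score is clamped to [0.0, 1.0], rounded to 2 places."""
    base = sum(w * company.dimensions.get(d, 0.0) for d, w in WEIGHTS.items())
    bonus = max((SIGNAL_BONUS.get(s, 0.0) for s in company.signals), default=0.0)
    total = min(1.0, base + bonus)
    # Edge-case override: the profile fits but the tech stack is wrong.
    if not company.tech_stack_ok:
        return round(min(total, 0.5), 2), "capped: profile fits, tech stack does not"
    return round(total, 2), "weighted dimensions plus strongest signal"

acme = Company("Acme", {d: 1.0 for d in WEIGHTS}, ["role_reposted"])
print(score(acme))
```

The point of the sketch is the shape: explicit weights, an explicit hierarchy, and a named override, all of which can be revised after each campaign cycle.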

Every campaign cycle, you update the skill file based on what you learned. The scoring logic gets sharper. The copy angles that worked get embedded. The system improves without you rebuilding it.

3. API Connections: Live Data In, Actions Out

Context engineering without live data is just organized instructions. The third piece is connecting your Claude Code environment directly to your stack via MCP servers.

Apollo for enrichment. Supabase for signal data. Smartlead for campaign execution. Once these are wired in, Claude Code no longer just analyzes what you paste in. It pulls, processes, and pushes, and the full pipeline runs from a single instruction.

This is where the demo-versus-production gap becomes visible. In the demo, you paste data into the chat. In production, the system reads from your signal database, applies the scoring model from your skill file, enriches through Apollo, writes copy using your sequence structure skill, and stages the campaign. No copy-paste. No re-briefing. The pipeline runs.
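
The flow described above can be sketched as a chain of steps. Every function here is a hypothetical in-memory stub standing in for a real integration. None of them call the actual Supabase, Apollo, or Smartlead APIs, and the 0.7 threshold is an assumed value.

```python
def run_pipeline(fetch_signals, score_company, enrich_contact,
                 write_email, stage_campaign, threshold=0.7):
    """Read signals, keep companies at or above threshold, enrich, draft, stage."""
    staged = []
    for company in fetch_signals():
        if score_company(company) < threshold:
            continue  # below the ICP bar: skip enrichment and copy entirely
        contact = enrich_contact(company)
        staged.append({
            "company": company["name"],
            "to": contact["email"],
            "body": write_email(company, contact),
        })
    stage_campaign(staged)
    return staged

# Hypothetical stubs so the sketch runs without any external service.
def fetch_signals():
    return [{"name": "Acme", "score": 0.9}, {"name": "Globex", "score": 0.4}]

def score_company(company):
    return company["score"]

def enrich_contact(company):
    return {"email": f"founder@{company['name'].lower()}.example"}

def write_email(company, contact):
    return f"Saw that {company['name']} reposted a sales role."

sent = []

def stage_campaign(emails):
    sent.extend(emails)

staged = run_pipeline(fetch_signals, score_company, enrich_contact,
                      write_email, stage_campaign)
print(staged)
```

Passing each step in as a function keeps the orchestration separate from the integrations, so a stub can be swapped for a real connection one at a time.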

4. A Memory Layer: What the System Learned

The most underused piece in almost every Claude Code GTM setup.

Your CLAUDE.md should be updated after every campaign cycle. Reply rates from last week's sequence. Which angle generated the most positive replies. Which segment proved too early. What the pattern was in the three "interested but not now" responses you got.

You don't do this manually. You instruct Claude to write a campaign summary to a structured file at the end of each run. That file gets referenced at the start of the next session. The system accumulates operational memory.
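
One simple way to structure that file is an append-only log with one JSON record per campaign run. This is a sketch under that assumption. The file name, the field names, and the example values are illustrative, not a format Claude Code requires.

```python
import json
import tempfile
from pathlib import Path

def append_summary(path, summary):
    """Append one campaign summary as a single JSON line."""
    with Path(path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(summary) + "\n")

def recent_summaries(path, n=3):
    """Return the last n summaries, oldest first, or [] if the file is missing."""
    p = Path(path)
    if not p.exists():
        return []
    lines = [ln for ln in p.read_text(encoding="utf-8").splitlines() if ln.strip()]
    return [json.loads(ln) for ln in lines[-n:]]

# Demo in a temporary directory. In practice the log would live in the repo.
with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "campaign_log.jsonl"  # hypothetical file name
    append_summary(log, {"run": 1, "angle": "hiring freeze", "note": "too early"})
    append_summary(log, {"run": 2, "angle": "job signal", "reply_rate": 0.08})
    latest = recent_summaries(log, n=1)

print(latest)
```

An append-only log means no run overwrites an earlier one, and the next session only needs the last few records to pick up where the previous one left off.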

Over time, your Claude Code environment knows more about your ICP behavior than any prompt you could write in a single session.

What This Looks Like Running in Production

Here's the concrete version.

In my outbound system, every week runs like this. Trigify fires signals. Companies that match ICP criteria based on recent job postings or LinkedIn activity get written to a Supabase table. The signal pipeline runs classification, applies the ICP scoring model from the skill file, and enriches contact data through Apollo.

When the pipeline completes, a session starts with CLAUDE.md loaded. Claude reads the classification results, applies the copy routing skill to select the right sequence template based on signal type, generates personalized email bodies using the context framework baked into the skill, and stages the sequence in Smartlead.
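
The copy routing step is essentially a lookup from signal type to template, with a fallback. A minimal sketch follows. The signal type names and the template wording are hypothetical, and in the system described here the routing rules live in a skill file rather than in code.

```python
from string import Template

# Hypothetical signal types mapped to opening-line templates.
TEMPLATES = {
    "role_reposted": Template("Noticed $company reposted the $role role."),
    "sales_hire_posted": Template("Saw $company is hiring for $role."),
}
DEFAULT_TEMPLATE = Template("Following what $company is building.")

def route(signal_type):
    """Pick the template for a signal type, falling back to a general opener."""
    return TEMPLATES.get(signal_type, DEFAULT_TEMPLATE)

def draft_opening(signal):
    # safe_substitute leaves unknown placeholders in place instead of raising.
    return route(signal["type"]).safe_substitute(signal)

opening = draft_opening(
    {"type": "role_reposted", "company": "Acme", "role": "Account Executive"}
)
print(opening)
```

Keeping the fallback explicit matters: a signal type nobody anticipated should still produce a usable draft rather than a failed run.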

Nothing gets explained in the session. No ICP re-briefing. No "here's how we approach outbound." That context lives in the environment. The session runs against it.

The first time I built this, it took two weeks. When I build a version of this for a client now, it takes three days. Because the skills are already written. The methodology is already encoded. The architecture is reusable.

That's the compounding effect. The system gets better every cycle. Each engagement makes the next one faster and more accurate. The skill files that encode ICP scoring, copy routing, and signal classification transfer across client environments because the methodology lives in text, not in the head of the person running the session.

The Real Differentiator Is the Environment, Not the Model

Every agency is running Claude Code demos right now. The demos look impressive. They're also not running at 7 AM on Monday when no one is watching.

The teams who have figured this out aren't using a better model or writing better prompts. They've built operating environments: CLAUDE.md files that encode real judgment, skill files that carry methodology forward across sessions, API connections that replace manual data work, memory layers that improve every cycle.

Context engineering is the architectural shift from "AI assistant" to "GTM system." It's not a workflow tip. It's a different way of thinking about what you're building.

If your Claude Code sessions still start with you explaining your ICP, you're running a hotel room setup in an apartment-shaped tool.

The Monday morning action: Open your current CLAUDE.md. If it's under 400 words, it's a stub, not a brain. Add your ICP definition with signal-level specificity. Add your last three campaign learnings. Add one skill file for your most repeated task. Then run a session without explaining anything.
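
The 400-word check is easy to automate. Here is a small sketch, assuming CLAUDE.md sits in the current directory and that splitting on whitespace is a good enough word count. The helper names are made up for illustration.

```python
from pathlib import Path

def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())

def is_stub(text, min_words=400):
    """True if the text falls under the word threshold used in this article."""
    return word_count(text) < min_words

path = Path("CLAUDE.md")  # assumed location
if path.exists():
    text = path.read_text(encoding="utf-8")
    verdict = "stub" if is_stub(text) else "ok"
    print(f"{path}: {word_count(text)} words ({verdict})")
else:
    print(f"{path} not found")
```

Word count is only a proxy. A long file with no ICP signals or campaign learnings is still a stub.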

The gap between that session and your last one will show you exactly what context engineering is, more clearly than any conference demo can.

Until next week,

The GTM Architects